RSX Rover: Autonomy and Perception

Robotics & Perception · Design Team · 2021–2022

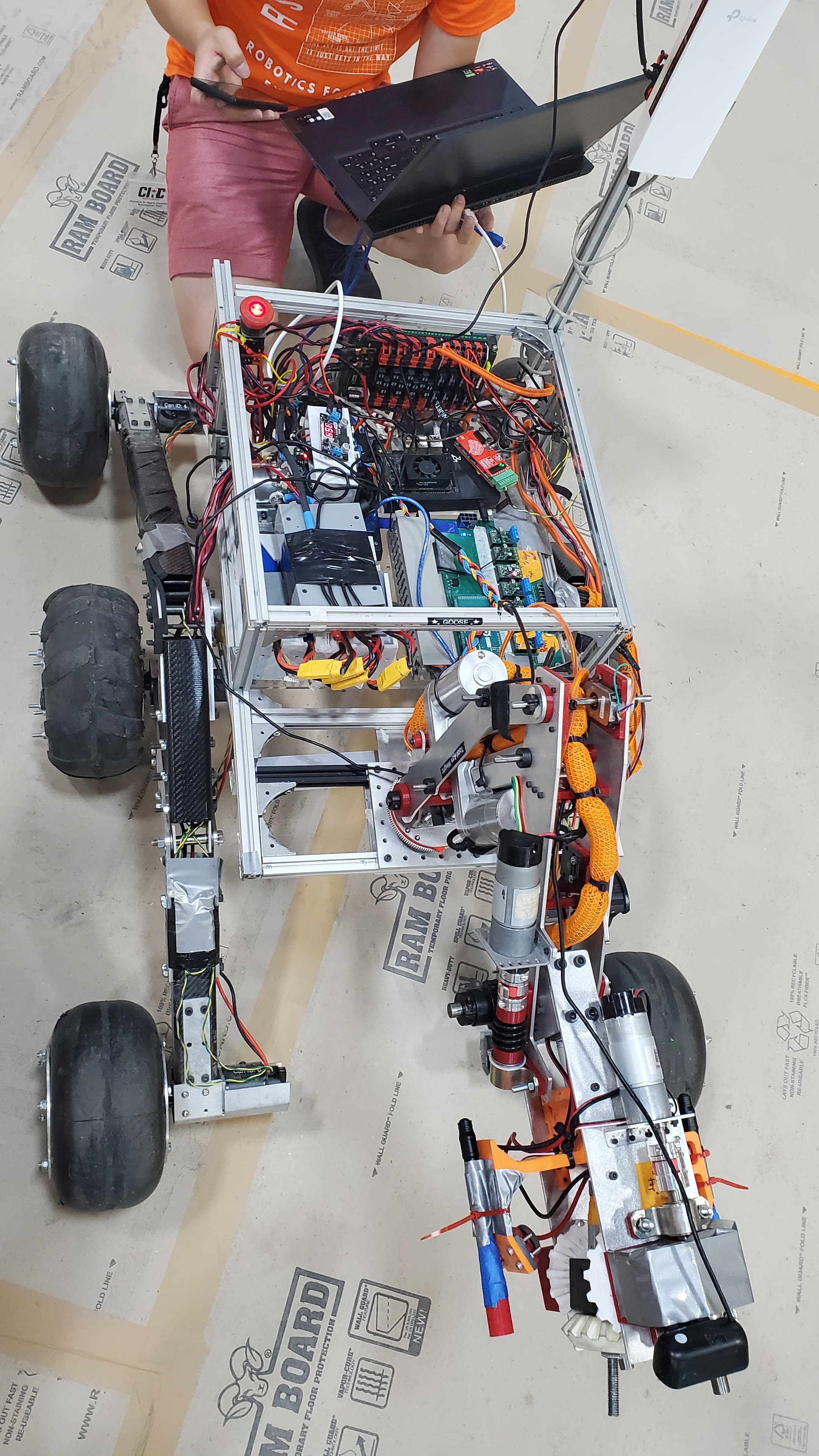

I was a core software and autonomy member of the Robotics for Space Exploration (RSX) design team, working on a Mars-style rover for competitions such as the Canadian International Rover Challenge (CIRC) and the University Rover Challenge (URC). My work focused on sensor bring-up, perception, and autonomy infrastructure so the rover had reliable data for downstream navigation and decision-making.

- Team: Robotics for Space Exploration design team

- Focus: sensor integration, autonomy infrastructure, and perception support

- My role: LiDAR and IMU bring-up, ROS tooling, debugging, and development workflow support

- Goal: provide reliable sensing and system infrastructure for rover autonomy tasks

Role and context

On RSX, I joined the software and autonomy side of the team, where the rover had to operate in outdoor, unstructured environments while completing tasks related to navigation, science operations, and mission execution. That meant the software stack needed to be reliable enough to support testing in conditions that were far less controlled than a classroom or lab setting.

For CIRC 2022, I worked on bringing up and integrating the LiDAR and IMU stack on the rover. This involved understanding the sensor interfaces, coordinating with mechanical and electrical teammates, and building ROS-based components that could run consistently during field tests.

Autonomy and perception work

My work focused on making the sensing pipeline stable enough for downstream autonomy modules to rely on. Some of the main pieces were:

- LiDAR integration: configuring the sensor, writing launch files, and validating point cloud output across different environments and testing conditions.

- IMU and odometry support: helping integrate inertial data with rover motion estimates to improve pose information for mapping and navigation.

- Visualization and debugging: using RViz and small Python tools to inspect transforms, identify calibration issues, and troubleshoot system behavior before and during testing.
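
A minimal sketch of the kind of small Python check that can support point cloud validation is shown below. It is illustrative only and not the code the team ran: the `check_cloud` function, the `CloudReport` type, and the 0.3 m and 100 m range limits are all assumptions. It takes plain `(x, y, z)` tuples so it runs without ROS; in a real node the points would first be extracted from the incoming point cloud message.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple

Point = Tuple[float, float, float]


@dataclass
class CloudReport:
    """Summary of one point cloud frame (hypothetical helper type)."""
    total: int
    valid: int
    min_range: Optional[float]  # None when no point passed the checks
    max_range: Optional[float]

    @property
    def valid_fraction(self) -> float:
        return self.valid / self.total if self.total else 0.0


def check_cloud(points: Iterable[Point],
                min_r: float = 0.3,     # assumed near limit, in metres
                max_r: float = 100.0    # assumed far limit, in metres
                ) -> CloudReport:
    """Count points that are finite and lie within [min_r, max_r] of the sensor."""
    total = 0
    valid = 0
    lo: Optional[float] = None
    hi: Optional[float] = None
    for x, y, z in points:
        total += 1
        # Drop NaN/inf returns, which some drivers emit for missing echoes.
        if not all(math.isfinite(v) for v in (x, y, z)):
            continue
        r = math.sqrt(x * x + y * y + z * z)
        if not (min_r <= r <= max_r):
            continue
        valid += 1
        lo = r if lo is None else min(lo, r)
        hi = r if hi is None else max(hi, r)
    return CloudReport(total=total, valid=valid, min_range=lo, max_range=hi)


if __name__ == "__main__":
    frame = [(1.0, 0.0, 0.0), (0.0, 3.0, 4.0),
             (float("nan"), 0.0, 0.0), (0.0, 0.0, 0.1)]
    report = check_cloud(frame)
    print(f"{report.valid}/{report.total} valid points, "
          f"range {report.min_range} to {report.max_range} m")
```

Logging a summary like this per frame makes it easy to compare sensor output between an indoor bench test and an outdoor field test, and a sudden drop in `valid_fraction` is a quick hint that a cable, mount, or configuration changed.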

Infrastructure and team impact

Beyond the sensor stack itself, I also helped set up a shared Ubuntu development environment and ROS workspace structure so newer team members could get up and running more quickly. Standardized launch files, shared tooling, and clearer setup steps made it easier for the team to run repeatable tests and spend less time fighting the environment.

This infrastructure work mattered because field-testing time was limited, and much of the team's progress depended on being able to debug quickly and trust the software stack before the rover went outside.

What I learned

RSX was one of my first real experiences with how messy robotics can be in practice. Sensors are noisy, hardware connections fail, systems drift out of calibration, and software that works on a laptop can behave very differently once it is running on the robot.

That experience pushed me to write more defensive code, build better debugging workflows, and think about robotics as a full system rather than a collection of isolated modules. It also strengthened my interest in perception and autonomy work where reliability matters just as much as the algorithm itself.
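
One common form of defensive code in this setting is a guard that refuses to trust sensor data that has stopped arriving. The sketch below illustrates the idea under stated assumptions and is not taken from the RSX codebase: the `FreshnessMonitor` class, the sensor names, and the timeout values are hypothetical, and it uses a plain monotonic clock rather than ROS time so it can run on its own.

```python
import time
from typing import Callable, Dict, List, Optional


class FreshnessMonitor:
    """Track when each sensor last reported and list the ones that went quiet."""

    def __init__(self, timeouts: Dict[str, float],
                 clock: Callable[[], float] = time.monotonic) -> None:
        # timeouts maps a sensor name to its allowed silence, in seconds.
        self._timeouts = dict(timeouts)
        self._clock = clock
        self._last_seen: Dict[str, Optional[float]] = {
            name: None for name in self._timeouts
        }

    def mark(self, name: str) -> None:
        """Record that a message from `name` just arrived."""
        if name not in self._timeouts:
            raise KeyError(f"unknown sensor: {name}")
        self._last_seen[name] = self._clock()

    def stale(self) -> List[str]:
        """Return sensors that never reported or exceeded their timeout."""
        now = self._clock()
        quiet = []
        for name, limit in self._timeouts.items():
            seen = self._last_seen[name]
            if seen is None or now - seen > limit:
                quiet.append(name)
        return sorted(quiet)


if __name__ == "__main__":
    # Timeouts here are placeholders, not measured values.
    monitor = FreshnessMonitor({"lidar": 0.5, "imu": 0.1})
    monitor.mark("lidar")
    print("stale sensors:", monitor.stale())
```

In a ROS node, `mark` would be called from each subscriber callback and `stale` from a timer, so downstream modules can stop or slow the rover when an input goes silent instead of acting on old data.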

Tech stack

- ROS for robotics integration

- Python for ROS nodes and tooling

- LiDAR and IMU bring-up and calibration

- Ubuntu and Linux development workflow

- RViz for visualization and debugging

- Git and shared ROS workspaces