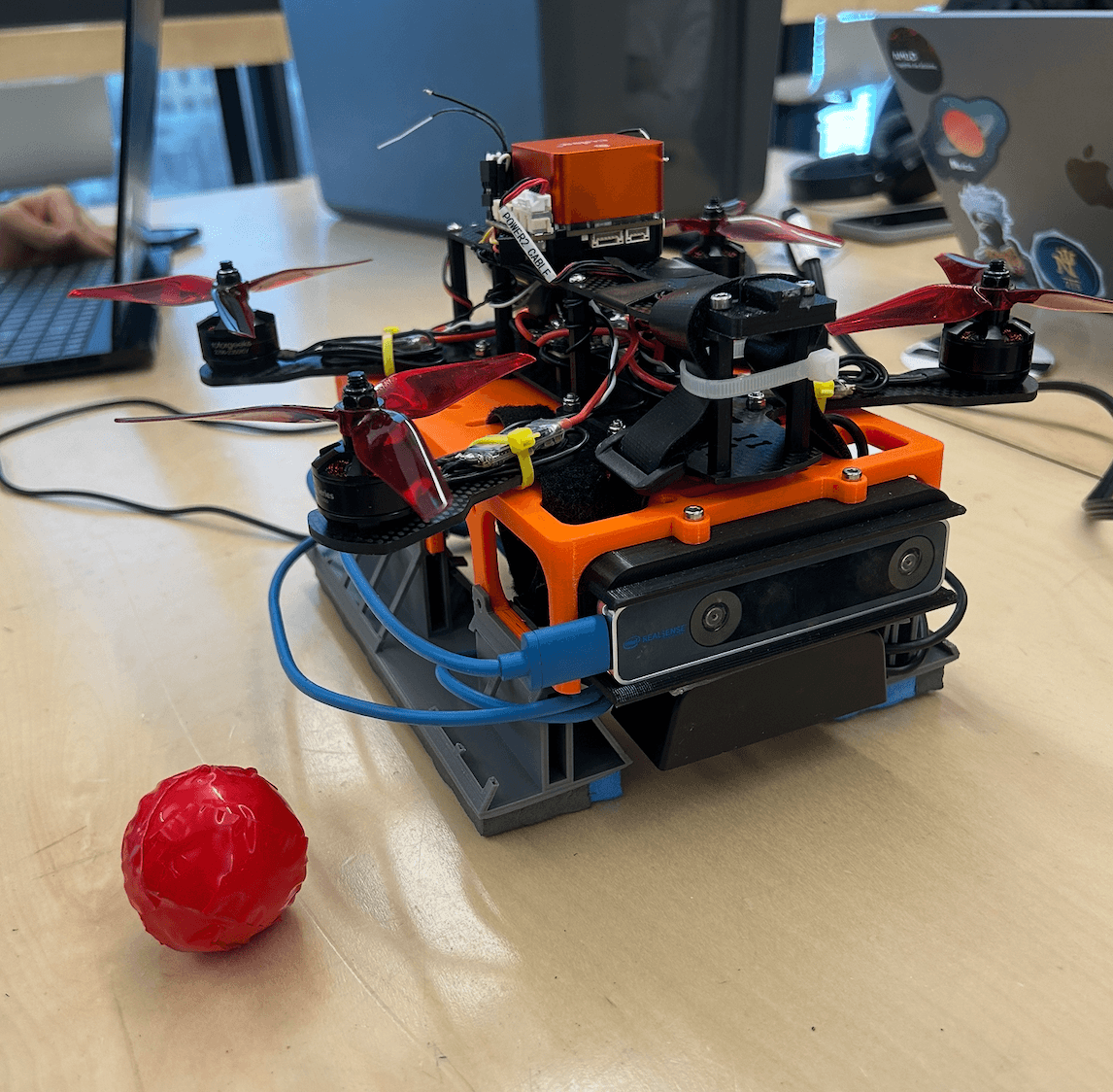

ParSight - golf-ball tracking drone

Robotics & Perception · Capstone · 2024

ParSight is an autonomous drone system designed to help senior golfers track golf balls in real time and locate where they land. The drone follows the ball in flight, then hovers over its final resting position to provide a clear visual marker without interfering with gameplay.

Snapshot

- Platform: Minion Mini H-Quad with Jetson Nano and Orange Cube+

- Perception: RGB camera, HSV filtering, and OpenCV contour detection

- Control: Image-Based Visual Servoing (IBVS) with a proportional-derivative (PD) controller

- Detection accuracy: about 95% with roughly 3% false positives

- Latency: about 350 ms end-to-end at 30 Hz

- Final hover error: about 6 cm from the ball's resting position

Problem and motivation

ParSight was built as part of our final-year engineering capstone to address a real accessibility challenge in recreational sports. Senior golfers can struggle to visually track a ball in flight and locate where it lands, especially in visually cluttered outdoor environments.

Our goal was to create a lightweight autonomous system that could follow the ball and then mark its landing point from above. The system needed to work in real time, run on compact onboard hardware, and remain simple enough to be practical for an assistive use case.

System architecture

ParSight follows a Sense, Plan, and Act pipeline running onboard the drone:

- Sense: A downward-facing RGB camera streams video at 30 FPS to an onboard NVIDIA Jetson Nano running ROS2.

- Perception: Each frame is processed using HSV color filtering and OpenCV-based contour detection. Candidate contours are filtered using shape and size constraints to isolate the red golf ball in cluttered scenes.

- Plan and Act: The pixel offset between the detected ball and the image center is the error signal for Image-Based Visual Servoing. A PD controller converts this image-space error into position commands for the drone's flight controller.
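The Plan and Act step can be illustrated with a small PD visual-servoing loop. This is a minimal sketch under stated assumptions, not ParSight's controller. The gains (`kp`, `kd`), focal length, image size and step limit are hypothetical placeholders. The class name `PDVisualServo` is made up for illustration. The sketch also assumes a pinhole camera pointing straight down, so that one pixel on the ground covers roughly `altitude / focal_px` metres.

```python
from dataclasses import dataclass, field
from typing import Optional, Tuple


@dataclass
class PDVisualServo:
    """Minimal IBVS-style PD sketch for a downward-facing camera.

    All gains, intrinsics and limits are hypothetical placeholders,
    not tuned values from ParSight.
    """

    kp: float = 0.8                           # proportional gain (assumed)
    kd: float = 0.2                           # derivative gain (assumed)
    focal_px: float = 600.0                   # focal length in pixels (assumed)
    image_size: Tuple[int, int] = (640, 480)  # (width, height) in pixels (assumed)
    max_step_m: float = 0.5                   # clamp on each position correction (assumed)
    _prev_err: Optional[Tuple[float, float]] = field(default=None, init=False)

    def _clamp(self, value: float) -> float:
        return max(-self.max_step_m, min(self.max_step_m, value))

    def update(self, ball_px: Tuple[float, float], altitude_m: float,
               dt: float) -> Tuple[float, float]:
        """Return an (x, y) position correction in metres along the image axes.

        Rotating this into the drone's body or world frame depends on the
        camera mounting and is left out of this sketch.
        """
        cx, cy = self.image_size[0] / 2.0, self.image_size[1] / 2.0
        # Pinhole model: one pixel spans roughly altitude / focal_px metres on the ground.
        scale = altitude_m / self.focal_px
        ex = (ball_px[0] - cx) * scale
        ey = (ball_px[1] - cy) * scale

        # Finite-difference derivative of the error; zero on the first sample.
        if self._prev_err is None or dt <= 0:
            dex = dey = 0.0
        else:
            dex = (ex - self._prev_err[0]) / dt
            dey = (ey - self._prev_err[1]) / dt
        self._prev_err = (ex, ey)

        # PD law on the metric error, clamped so one noisy frame cannot cause a large jump.
        return (self._clamp(self.kp * ex + self.kd * dex),
                self._clamp(self.kp * ey + self.kd * dey))
```

Take a ball 80 px right of center, seen from 3 m. With the placeholder values, the error is 80 × 3 / 600 = 0.4 m. The first correction is therefore about 0.8 × 0.4 = 0.32 m along the image x axis. The derivative term damps the response when the ball's image position changes quickly between frames.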

I worked mainly on the perception side of the system. This included an early YOLO-based segmentation approach, which we explored before the team pivoted to HSV filtering for better performance and reliability on the Jetson Nano.
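The HSV-plus-contour idea can be shown without OpenCV. This is a dependency-free sketch, not the team's pipeline. An OpenCV version would typically use `cv2.cvtColor`, `cv2.inRange` and `cv2.findContours`. Note that OpenCV's 8-bit HSV stores hue as 0-179, so its thresholds differ from the 0-1 scale used here.

The sketch makes three substitutions:
- Python's `colorsys` replaces OpenCV's HSV conversion.
- A breadth-first connected-components pass replaces contour extraction.
- Bounding-box aspect ratio and fill ratio stand in for the shape constraints.

The thresholds (`RED_HUE_BANDS`, `MIN_SAT`, `MIN_VAL`, area and fill limits) and all function names are hypothetical.

```python
import colorsys
from collections import deque

# Hypothetical thresholds for a red ball. colorsys uses [0, 1] for h, s and v,
# and red hue wraps around 0, so two hue bands are accepted.
RED_HUE_BANDS = ((0.0, 0.03), (0.95, 1.0))
MIN_SAT, MIN_VAL = 0.5, 0.3


def red_mask(image):
    """Boolean mask of red-enough pixels; image is rows of (r, g, b) in 0-255."""
    mask = []
    for row in image:
        mask_row = []
        for r, g, b in row:
            h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            red_hue = any(lo <= h <= hi for lo, hi in RED_HUE_BANDS)
            mask_row.append(red_hue and s >= MIN_SAT and v >= MIN_VAL)
        mask.append(mask_row)
    return mask


def connected_blobs(mask):
    """4-connected components of True pixels, each a list of (y, x) coordinates."""
    height = len(mask)
    width = len(mask[0]) if height else 0
    seen = [[False] * width for _ in range(height)]
    blobs = []
    for y in range(height):
        for x in range(width):
            if not mask[y][x] or seen[y][x]:
                continue
            seen[y][x] = True
            queue, pixels = deque([(y, x)]), []
            while queue:
                py, px = queue.popleft()
                pixels.append((py, px))
                for ny, nx in ((py + 1, px), (py - 1, px), (py, px + 1), (py, px - 1)):
                    if 0 <= ny < height and 0 <= nx < width and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            blobs.append(pixels)
    return blobs


def find_ball(image, min_area=20, max_area=5000, max_aspect=1.4, fill_range=(0.55, 0.9)):
    """Return the (x, y) centroid of the largest ball-like red blob, or None."""
    best = None
    for pixels in connected_blobs(red_mask(image)):
        area = len(pixels)
        if not min_area <= area <= max_area:
            continue  # size constraint
        ys = [p[0] for p in pixels]
        xs = [p[1] for p in pixels]
        box_w = max(xs) - min(xs) + 1
        box_h = max(ys) - min(ys) + 1
        aspect = max(box_w, box_h) / min(box_w, box_h)
        fill = area / (box_w * box_h)  # about pi/4 for a large disc, 1.0 for a filled square
        if aspect > max_aspect or not fill_range[0] <= fill <= fill_range[1]:
            continue  # shape constraint: keep only roughly round blobs
        if best is None or area > best[0]:
            best = (area, sum(xs) / area, sum(ys) / area)
    return None if best is None else (best[1], best[2])
```

The fill-ratio test rejects red rectangles that pass the color filter, such as a square marker whose fill ratio is 1.0. The aspect-ratio test rejects elongated red streaks.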

Performance and results

We deployed ParSight on a Minion Mini H-Quad equipped with a Jetson Nano and Orange Cube+ flight controller. The system maintained real-time operation while running the full perception and control loop onboard.

In minimum viable product (MVP) testing, the drone consistently hovered within about 6 cm of the ball's final resting position. Across trials, the system achieved approximately 95% detection accuracy with around 3% false positives, and end-to-end latency remained close to 350 ms.
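Figures like these can be computed from labelled flight logs. The sketch below shows one way to do it. The per-frame definitions of detection accuracy and false-positive rate are assumptions, not necessarily the ones used in testing, and the function names are hypothetical. The latency helper turns milliseconds into camera frames, which shows how stale each control command's input is.

```python
import math


def detection_rates(frames):
    """Per-frame detection metrics under assumed definitions.

    frames: iterable of (ball_visible, detection) pairs, where detection is
    None (nothing detected), True (detection on the ball) or False
    (detection on something other than the ball).
    """
    frames = list(frames)
    visible = [d for vis, d in frames if vis]
    detections = [d for _, d in frames if d is not None]
    # Assumed definition: share of ball-visible frames in which the ball was found.
    accuracy = sum(1 for d in visible if d is True) / len(visible) if visible else 0.0
    # Assumed definition: share of all detections that were not the ball.
    false_positive_rate = (
        sum(1 for d in detections if d is False) / len(detections) if detections else 0.0
    )
    return accuracy, false_positive_rate


def hover_error_m(drone_xy, ball_xy):
    """Horizontal distance between the hover point and the ball, in metres."""
    return math.dist(drone_xy, ball_xy)


def latency_in_frames(latency_ms, frame_rate_hz):
    """Number of camera frames that elapse during one end-to-end latency period."""
    return latency_ms * frame_rate_hz / 1000.0
```

With the reported numbers, `latency_in_frames(350, 30)` is 10.5. Each command therefore acts on a ball position roughly ten frames old, which matters most while the ball is still moving and much less during the final hover.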

The project met 8 of its 10 major performance targets. This showed that a lightweight classical vision pipeline can deliver reliable assistive autonomy without relying on heavier deep learning models or more expensive compute.

What I learned

This project gave me hands-on experience building a complete robotic system that combined onboard perception, control, real-time constraints, and user-centered design. It reinforced how much system performance depends not only on model quality but also on practical choices around latency, hardware limits, and real-world robustness.

It also taught me the value of iteration and tradeoff analysis. Our shift from a heavier YOLO-based approach to HSV filtering was a good example of choosing the method that best fit the platform and use case, rather than the most complex method available.

Tech stack

- ROS2 on NVIDIA Jetson Nano

- Python and OpenCV for HSV filtering and contour detection

- Image-Based Visual Servoing

- Proportional-Derivative controller

- Orange Cube+ flight controller

- Downward-facing RGB camera at 30 FPS